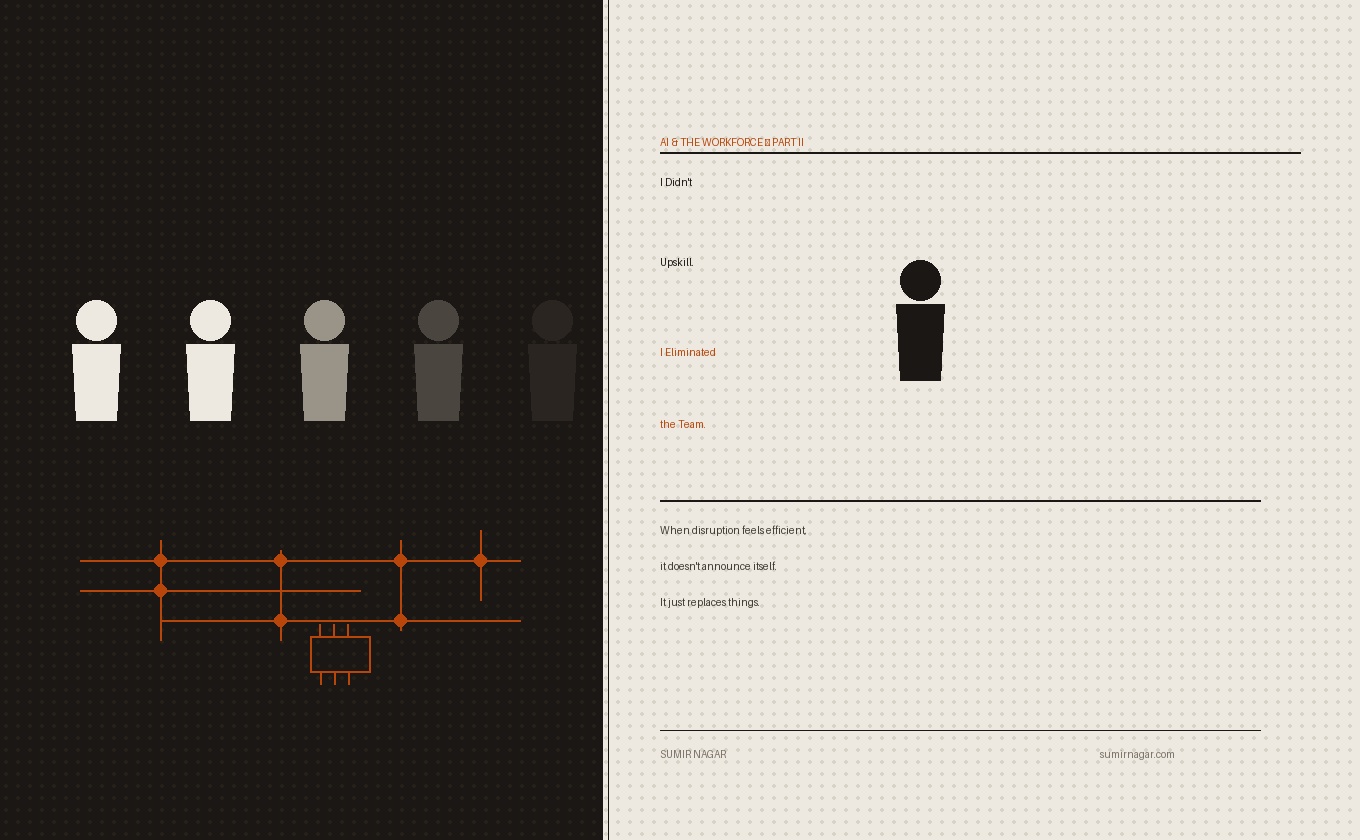

I Didn’t Upskill. I Eliminated the Team.

A few months ago, I argued that reskilling was an illusion. Then I accidentally proved it — by building something alone in three days that should have taken a team a month.

A few months ago, I wrote The Illusion of Reskilling and the Coming Crisis — an essay arguing that the world’s faith in reskilling as the answer to AI disruption was misplaced. That it was a story we were telling ourselves to avoid the harder conversation.

The essay was well-reasoned. Structured. Data-backed. It made people nod, share it on LinkedIn, and then return to the comforting belief that technology creates as many jobs as it destroys.

Then I tried to build something. And the argument I had made from the outside suddenly landed on me — from the inside.

This is that story. Read the first essay if you haven’t. This one picks up where theory ends and reality begins.

This Wasn’t an Experiment. It Was a Problem.

I wasn’t trying to prove anything. I wasn’t running a controlled test on AI productivity or staging a demonstration of what’s possible. I had a real business problem.

The corporate training market is enormous, crowded, and — if we’re being honest — deeply boring. Everyone has content. Everyone has a framework. Everyone has a deck that promises transformation in 90 minutes and reflection in the final 10. If you’ve seen one leadership workshop, you’ve seen the architecture of most.

So the real question wasn’t what to teach. It was how to make what I was building impossible to walk away from. That meant one thing: not another workshop. An experience. Something immersive, interactive, gamified — the kind of thing that doesn’t politely inform, but actually unsettles people in the right places.

This is where things should have slowed down. Because building something like that takes time. It takes people. It takes process.

What It Was Supposed to Take

I know how this works. Three decades across financial services, fintech, and large-scale operations will do that to you. You develop a reliable internal estimate for what things cost — in time, in people, in cognitive overhead.

A working application of this kind — functional, polished, ready for real users — would require a team of four developers. Several weeks, possibly a month. That’s before you factor in the ritual: the definition documents, the scope debates, the estimate revisions, the testing cycles, the fixes that generate new issues, and the meetings that begin with clarity and end with “let’s revisit this.”

That’s not dysfunction. That’s how building things works.

Or rather — that’s how it worked.

What Actually Happened

I built it in three days.

Not a rough sketch. Not a prototype held together with assumptions and hope. A working application — functional, tested, and ready to put in front of people.

And I built it alone.

I built it with Claude. And the moment I say that, there is a version of this story that wants to flatten what happened into something palatable: “AI helped me work faster.”

That’s not what this was.

The Part Nobody Is Comfortable Saying

What I experienced wasn’t speed. It was something more disorienting than speed.

It was control. Total, frictionless, unmediated control.

No back-and-forth on requirements. No alignment conversations that slowly become philosophical discussions about what we’re really building. No “let’s sync” that quietly becomes the work itself. No reframing the brief for the third time to someone who has technically understood it but somehow not quite.

I would think something — and it would get built. Immediately.

But it went further than that. Claude didn’t just assist with execution. It functioned like an entire delivery operation that had decided to skip the human overhead entirely:

- Built the application

- Tested it

- Generated the Functional Specification Document

- Created the Technical Design Document

- Wrote the test cases

- Set up the test environment

- Identified failure points

- Fixed them

No knowledge transfer. No context-setting. No explaining the same thing in three different ways to three different people.

Just intent — and output.

What Disappeared Wasn’t Just Headcount

At first reading, this looks like a story about developers. It isn’t.

What disappeared wasn’t execution capacity. Execution capacity is actually the easy part — the part organisations have been automating in various ways for years.

What disappeared was everything around execution. The explanations. The coordination. The translation of intent into something other people can act on. The negotiation between what was meant and what was heard. The management of the gap between vision and interpretation.

And that is where the majority of modern professional work actually lives. Not in doing things. In aligning people who are doing things.

That layer — the coordination layer — is the invisible infrastructure of most organisations. It is also, it turns out, deeply automatable.

My Earlier Argument — Lived, Not Argued

In The Illusion of Reskilling and the Coming Crisis, I argued that reskilling — the story we tell ourselves about navigating AI disruption — is largely a mirage. That it assumes a linearity that doesn’t exist: workers get displaced, workers learn new skills, workers re-enter the economy at a comparable level. Clean, logical, reassuring.

At the time, that was an argument.

Now it feels — insufficient.

Because the scenario I’m describing isn’t reskilling’s failure. It isn’t even its opposite. It’s something the reskilling debate hasn’t properly accounted for: the individual who doesn’t need to reskill because the team they would have assembled has simply become unnecessary.

I didn’t move into a new role. I didn’t transition. I didn’t acquire a new skill set and graduate into a different category of work. I compressed an entire function into one person with an AI partner — and built the thing.

The Subtler Risk: When Better Output Hides Shallower Thinking

There’s a layer to this that I want to handle carefully, because it cuts both ways.

Working with AI makes you feel sharper. More articulate. More structured. Your output improves — often dramatically. The quality of what you produce begins to exceed what you could have produced independently, and it happens fast enough that you mostly feel upgraded.

But there is a risk that tends to arrive quietly, and it’s worth naming.

When something else is doing a significant portion of the thinking, your own depth can erode without announcing itself. You begin to operate at the level of prompts rather than principles. You get better at directing intelligence you don’t fully understand than at developing the understanding itself.

This doesn’t make AI dangerous to use. It makes it worth using with a particular kind of attention. The people who will navigate this well are those who use AI to amplify genuine depth, not substitute for its development.

This Isn’t a One-Off. It’s a Preview.

I understand the temptation to treat what I’ve described as a niche case. A specific convergence of circumstances: a technically sophisticated user, a particular kind of task, a particular AI tool at a particular moment in its development.

That reading would be comforting.

It would also be wrong.

What I experienced isn’t exceptional. It’s early. And early is precisely the moment when the pattern is easiest to dismiss — and therefore the most important moment to look at clearly.

Shifts of this kind don’t announce themselves. They begin quietly in edge cases, in individual decisions, in efficiency gains that don’t make it into board reports. And then, at some point, the accumulated quiet becomes the new normal — and the debate about whether it was happening becomes a debate about what to do now that it has.

The Structural Question We Are Choosing to Avoid

The implications extend well beyond the workforce debate.

If fewer people are needed to produce the same — or more — output, then fewer people earn. And if fewer people earn, fewer people spend. And if fewer people spend, the economic logic that underlies our current model of growth begins to strain in ways that reskilling programs and retraining budgets are not designed to address.

We are entering a phase where productivity and employment may no longer move together. Where the gains from AI are real, measurable, and concentrated — while the displacement is diffuse, slow, and easy to attribute to other causes until it isn’t.

At that point, this stops being a workforce story. It becomes a structural one. And structural problems resist individual solutions, no matter how well-designed those solutions are.

The question worth asking — and worth sitting with — is not how do we help people adapt? That question, while important, keeps the frame intact. The harder question is: what are we actually building towards, and for whom?

The Line We Haven’t Crossed Yet — But Will

When I first wrote about the illusion of reskilling, it was a case built from data, from economic modelling, from the observable failures of transition programs. It was an argument I believed, in the way we believe things we haven’t yet had occasion to test against our own experience.

Now it’s something I’ve lived.

I didn’t upskill. I didn’t reinvent myself into a new category. I didn’t transition — gracefully or otherwise — into an AI-adjacent role that didn’t exist before.

I built something that required a team. And I did it without one.

The conversation about reskilling, while necessary, is already behind the curve. We need to be asking a different set of questions. Not about how people adapt to AI, but about what kind of economy, what kind of society, we intend to build in a world where bearing the cost of human collaboration is increasingly optional.

That conversation requires honesty that is currently in short supply.

Where Do You Go From Here?

If you haven’t read the first essay, The Illusion of Reskilling and the Coming Crisis, go back and read it now. Then return to this one. The two pieces are meant to work together: the first lays out the argument; this one lives inside it.

Then try building something end-to-end. Not a prompt. Not a paragraph. Something that should have required other people. See what happens. Because the shift isn’t in what AI can do. It’s in what it quietly makes unnecessary — and how quickly you stop noticing.

